In a context where flexibility and responsiveness have become strategic imperatives, serverless architecture emerges as a natural evolution of the cloud. Beyond the myth of “serverless,” it relies on managed services (Function as a Service – FaaS, Backend as a Service – BaaS) capable of dynamically handling events and automatically scaling to match load spikes.

For mid- to large-sized enterprises, serverless transforms the cloud’s economic model, shifting from provisioning-based billing to a pay-per-execution approach. This article unpacks the principles of serverless, its business impacts, the constraints to master, and its prospects with edge computing, artificial intelligence, and multi-cloud architectures.

Understanding Serverless Architecture and Its Foundations

Serverless is based on managed services where cloud providers handle maintenance and infrastructure scaling. It enables teams to focus on business logic and design event-driven, decoupled, and modular applications.

The Evolution from Cloud to Serverless

The first generations of cloud were based on Infrastructure as a Service (IaaS), where organizations managed virtual machines and operating systems.

Serverless, by contrast, completely abstracts the infrastructure. On-demand functions (FaaS) or managed services (BaaS) execute code in response to events, without the need to manage scaling, patching, or server orchestration.

This evolution drastically reduces operational tasks and makes execution fine-grained: each invocation is billed as close as possible to actual resource consumption. The move toward smaller, independently deployed units is similar to the migration to microservices.

Key Principles of Serverless

The event-driven model is at the heart of serverless. Any action—HTTP request, file upload, message in a queue—can trigger a function, delivering high responsiveness to microservices architectures.
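As a rough illustration of this model, the sketch below routes three kinds of events to small, independent functions. The event shape (`source`, `payload`), the route names, and the handler signature are assumptions made for the example and do not correspond to any specific provider's format.

```python
from typing import Any, Callable, Dict

# Hypothetical event shape: {"source": "<http|storage|queue>", "payload": {...}}
Event = Dict[str, Any]
Response = Dict[str, Any]


def on_http_request(payload: Dict[str, Any]) -> Response:
    # Reacts to an HTTP request forwarded by an API gateway.
    return {"status": 200, "body": f"Hello, {payload.get('name', 'world')}"}


def on_file_upload(payload: Dict[str, Any]) -> Response:
    # Reacts to a file landing in object storage.
    return {"status": 202, "body": f"Queued {payload['key']} for processing"}


def on_queue_message(payload: Dict[str, Any]) -> Response:
    # Reacts to a message pulled from a queue.
    return {"status": 200, "body": f"Processed message {payload['message_id']}"}


# One small function per event source keeps the components decoupled.
ROUTES: Dict[str, Callable[[Dict[str, Any]], Response]] = {
    "http": on_http_request,
    "storage": on_file_upload,
    "queue": on_queue_message,
}


def handler(event: Event, context: Any = None) -> Response:
    """Entry point: pick the function that matches the event source."""
    route = ROUTES.get(event.get("source", ""))
    if route is None:
        return {"status": 400, "body": "Unsupported event source"}
    return route(event.get("payload", {}))
```

In a real deployment the platform usually wires each trigger to its own function, so the routing table would live in configuration rather than in code. The single entry point here only keeps the example self-contained.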

Because containers and instances are abstracted away, the approach is cloud-native by design: the platform packages and isolates each function, starts new instances quickly, and scales them automatically, which supports resilience.

The use of managed services (storage, NoSQL databases, API gateway) enables construction of a modular ecosystem. Each component can be updated independently without impacting overall availability, following API-first integration best practices.

Concrete Serverless Use Case

A retail company offloaded its order-terminal event processing to a FaaS platform. This eliminated server management during off-peak hours and handled traffic surges instantly during promotional events.

This choice proved that a serverless platform can absorb real-time load variations without overprovisioning, while simplifying deployment cycles and reducing points of failure.

The example also demonstrates the ability to iterate rapidly on functions and integrate new event sources (mobile, IoT) without major rewrites.

Business Benefits and Economic Optimization of Serverless

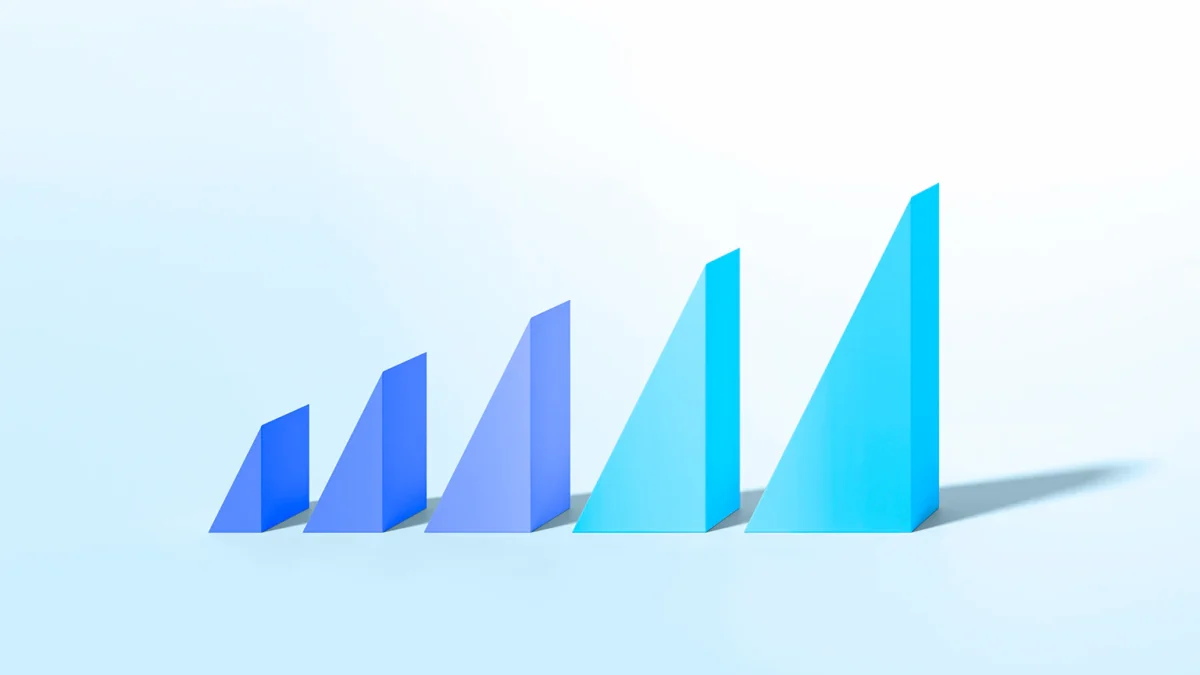

Automatic scalability helps maintain availability, even during exceptional usage spikes. The pay-per-execution model optimizes costs by aligning billing directly with the application’s actual consumption.

Automatic Scalability and Responsiveness

With serverless, each function runs in a dedicated environment spun up on demand. As soon as an event occurs, the provider automatically provisions the required resources.

This capability absorbs activity peaks without manual capacity forecasting or idle server costs, so end users get uninterrupted service despite usage variability.

For functions that are already initialized, provisioning delays are typically measured in milliseconds, which makes scaling near-instantaneous. This matters for mission-critical applications and dynamic marketing campaigns.

Execution-Based Economic Model

Unlike IaaS, where billing is based on continuously running instances, serverless typically charges per invocation and for execution time, weighted by the memory allocated to each function.

This granularity can reduce infrastructure costs by up to 50% depending on load profiles, especially for intermittent or seasonal usage.
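To make the comparison concrete, here is a minimal cost sketch. It assumes a common FaaS pricing shape (a per-request fee plus a fee per GB-second of execution) and a flat hourly price for always-on instances. All prices and workload figures are illustrative placeholders, not any provider's actual rates.

```python
def faas_monthly_cost(
    invocations: int,
    avg_duration_ms: float,
    memory_mb: int,
    price_per_gb_second: float,
    price_per_million_requests: float,
) -> float:
    """Estimate a monthly pay-per-execution bill.

    Compute is billed in GB-seconds: duration (s) x allocated memory (GB).
    """
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * price_per_gb_second
    request_cost = (invocations / 1_000_000) * price_per_million_requests
    return compute_cost + request_cost


def always_on_monthly_cost(
    instance_count: int, hourly_price: float, hours_per_month: float = 730
) -> float:
    """Estimate a monthly bill for instances that run continuously."""
    return instance_count * hourly_price * hours_per_month


if __name__ == "__main__":
    # Placeholder workload and prices, chosen only to show the calculation.
    faas = faas_monthly_cost(
        invocations=2_000_000,
        avg_duration_ms=200,
        memory_mb=512,
        price_per_gb_second=0.00002,
        price_per_million_requests=0.20,
    )
    iaas = always_on_monthly_cost(instance_count=2, hourly_price=0.05)
    print(f"FaaS estimate:      {faas:.2f}")
    print(f"Always-on estimate: {iaas:.2f}")
```

The gap narrows as traffic becomes sustained: at high, steady volumes the GB-second term grows linearly while the always-on bill stays flat, which is why the savings depend so strongly on the load profile.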

Organizations gain clearer budget visibility since each function becomes an independent expense item, aligned with business objectives rather than technical asset management, as detailed in our guide to securing an IT budget.

Concrete Use Case

A training organization migrated its notification service to a FaaS backend. Billing dropped by over 40% compared to the previous dedicated cluster, demonstrating the efficiency of the pay-per-execution model.

This saving allowed reallocation of part of the infrastructure budget toward developing new educational modules, directly fostering business innovation.

The example also shows that a modest initial migration effort can free significant financial resources for higher-value projects.

Constraints and Challenges to Master in the Serverless Approach

Cold starts can impact initial function latency if not anticipated. Observability and security require new tools and practices for full visibility and control.

Cold Starts and Performance Considerations

When a function hasn’t been invoked for a while, the provider must initialize a new execution environment for it, causing a “cold start” delay that can reach several hundred milliseconds or more, depending on the runtime and package size.

In real-time or ultra-low-latency scenarios, this impact can be noticeable and must be mitigated via warming strategies, provisioned concurrency, or by combining functions with longer-lived containers.

Code optimization (package size, lightweight dependencies) and memory configuration also influence startup speed and overall performance.

Observability and Traceability

Splitting an application into many small functions complicates event correlation. Logs, distributed traces, and metrics must be centralized using appropriate tools (OpenTelemetry, managed monitoring services) and visualized in an IT performance dashboard.
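The core idea behind correlation can be sketched without any tracing library: every function reuses the identifier it receives, or creates one if it is first in the chain, and writes it into each structured log line. The function names, the `correlation_id` field, and the in-memory `sink` list are assumptions for the example. A production setup would more likely rely on a tracing standard such as OpenTelemetry to carry this context.

```python
import json
import uuid
from typing import Any, Dict, List


def ensure_correlation_id(event: Dict[str, Any]) -> str:
    """Reuse the incoming ID, or start a new one at the edge of the system."""
    return event.get("correlation_id") or str(uuid.uuid4())


def log_event(sink: List[str], correlation_id: str, function: str, message: str) -> None:
    """Append one JSON log line; a real function would write to stdout."""
    sink.append(json.dumps({
        "correlation_id": correlation_id,
        "function": function,
        "message": message,
    }))


def validate_order(event: Dict[str, Any], sink: List[str]) -> Dict[str, Any]:
    cid = ensure_correlation_id(event)
    log_event(sink, cid, "validate_order", "order validated")
    # The ID travels with the outgoing event so the next function can reuse it.
    return {"correlation_id": cid, "order_id": event["order_id"]}


def notify_customer(event: Dict[str, Any], sink: List[str]) -> Dict[str, Any]:
    cid = ensure_correlation_id(event)
    log_event(sink, cid, "notify_customer", "notification sent")
    return {"correlation_id": cid, "order_id": event["order_id"], "notified": True}


if __name__ == "__main__":
    logs: List[str] = []
    notify_customer(validate_order({"order_id": "A1"}, logs), logs)
    for line in logs:
        print(line)
```

Because both log lines share the same `correlation_id`, a centralized log store can reassemble the full path of one order across functions with a single filter.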

Concrete Use Case

A government agency initially suffered from cold starts on critical APIs during off-peak hours. After enabling warming and adjusting memory settings, latency dropped from 300 to 50 milliseconds.

This lesson demonstrates that a post-deployment tuning phase is essential to meet public service performance requirements and ensure quality of service.

The example highlights the importance of proactive monitoring and close collaboration between cloud architects and operations teams.

Toward the Future: Edge, AI, and Multi-Cloud Serverless

Serverless provides an ideal foundation for deploying functions at the network edge, further reducing latency and processing data close to its source. It also simplifies on-demand integration of AI models and orchestration of multi-cloud architectures.

Edge Computing and Minimal Latency

By combining serverless with edge computing, you can execute functions in points of presence geographically close to users or connected devices.

This approach reduces end-to-end latency and limits data flows to central datacenters, optimizing bandwidth and responsiveness for critical applications (IoT, video, online gaming), while exploring hybrid cloud deployments.
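One way an edge function limits traffic to the central datacenter is by forwarding a compact summary instead of every raw reading. The sketch below assumes numeric sensor readings and a simple fixed threshold for anomalies. Both the function name and the threshold rule are hypothetical choices for illustration.

```python
from statistics import mean
from typing import Any, Dict, List


def summarize_at_edge(readings: List[float], threshold: float) -> Dict[str, Any]:
    """Reduce a batch of raw readings to a summary plus the anomalous values.

    Only this summary would be sent upstream; the raw batch stays local.
    """
    if not readings:
        return {"count": 0, "mean": None, "anomalies": []}
    return {
        "count": len(readings),
        "mean": mean(readings),
        # Assumption: a reading is anomalous when it exceeds a fixed threshold.
        "anomalies": [r for r in readings if r > threshold],
    }


if __name__ == "__main__":
    batch = [1.0, 2.0, 9.0]
    print(summarize_at_edge(batch, threshold=5.0))
```

The bandwidth saved depends on how rarely anomalies occur: the summary has a fixed size, so the larger the batch and the fewer the outliers, the smaller the share of data that leaves the edge location.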

Serverless AI: Model Flexibility

Managed machine learning services (inference, training) can be invoked in a serverless mode, eliminating the need to manage GPU clusters or complex environments.

Pre-trained models for image recognition, translation, or text generation become accessible via FaaS APIs, enabling transparent scaling as request volumes grow.

This modularity fosters innovative use cases such as real-time video analytics or dynamic recommendation personalization, without heavy upfront investment, as discussed in our article on AI in the enterprise.

Concrete Use Case

A regional authority deployed an edge-based image analysis solution combining serverless and AI to detect anomalies and incidents in real time from camera feeds.

This deployment reduced network load by 60% by processing streams locally, while ensuring continuous model training through multi-cloud orchestration.

The case highlights the synergy between serverless, edge, and AI in addressing public infrastructure security and scalability needs.

Serverless Architectures: A Pillar of Your Agility and Scalability

Serverless architecture reconciles rapid time-to-market, economic optimization, and automatic scaling, while opening the door to innovations through edge computing and artificial intelligence. The main challenges—cold starts, observability, and security—can be addressed with tuning best practices, distributed monitoring tools, and compliance measures.

By adopting a contextualized approach grounded in open source and modularity, each organization can build a hybrid ecosystem that avoids vendor lock-in and ensures performance and longevity.

Our experts at Edana support companies in defining and implementing serverless architectures, from the initial audit to post-deployment tuning. They help you design resilient, scalable solutions perfectly aligned with your business challenges.