Summary – The rise of LLMs creates a new attack surface, exposing your IT system to prompt injection, data leaks, RAG corpus poisoning, autonomous agents, and supply chain vulnerabilities. To secure it, isolate instructions and data, restrict permissions, filter inputs and outputs, log every flow, and conduct red teaming exercises.

Solution : establish a technical foundation and formal AI governance for controlled deployment.

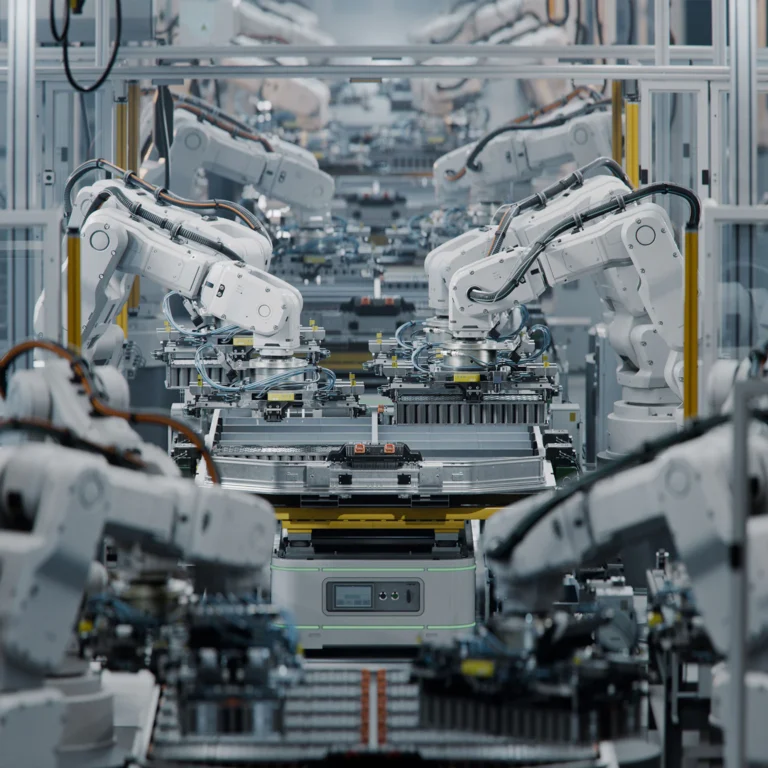

Large language models (LLMs) are often perceived as black boxes intended to generate text or moderate prompts. This reductive view overlooks the complexity of an LLM system in the enterprise, which involves data streams, connectors, third-party models, agents, and workflows.

Beyond preventing a few “jailbreak” cases, LLM security must be approached as a new application and organizational attack surface. This article details the concrete risks — prompt injection, data leakage, retrieval-augmented generation (RAG) knowledge-base poisoning, excessive agent autonomy, resource overconsumption, supply-chain vulnerabilities — and proposes a pragmatic foundation of technical, organizational, and governance safeguards.

A New Attack Surface: LLM as a Complete System

LLMs are not simple text-generation APIs. They integrate into workflows, access data, trigger agents, and can potentially modify information systems. Securing an LLM therefore means protecting a set of components and data flows, not just moderating its outputs.

Example: A large financial services firm had configured an internal chatbot without restricting access to its document repositories, exposing sensitive client information. This incident shows that a lack of fine-grained access control turns AI into a leakage vector.

Infrastructure and Connectors

LLM deployment generally involves connectors to database management systems (DBMS), enterprise content management (ECM) platforms, and third-party APIs. Each connection point can become an entryway for an attacker if not robustly secured and authenticated. Token- or certificate-based authentication mechanisms must be implemented and regularly audited. This architecture often relies on dedicated middleware to orchestrate exchanges.

Cloud environments introduce additional risks: misconfigured storage buckets or identity and access management (IAM) permissions can expose critical data. In production, the principle of least privilege applies to both users and LLM services to limit any privilege escalation.

Finally, monitoring data flows is essential to detect abnormal requests or unusual traffic volumes. Continuously configured observability tools can alert on overloads, unprecedented access attempts, or schema changes.

Access Rights and Data Flows

LLMs may be authorized to read from or write to various systems: customer relationship management (CRM), enterprise content management (ECM), and enterprise resource planning (ERP). Poor rights management can lead to unintended queries, such as the disclosure of confidential documents via an apparently innocuous prompt. Roles should be defined by business profile and reviewed periodically.

Logging LLM access and queries is a cornerstone of the security strategy. Every call to a document corpus and every text generation must be traced. In case of an incident, these logs facilitate forensic analysis and feedback to filtering mechanisms.

A preliminary input-filtering layer helps validate the consistency of incoming data. Rather than focusing solely on output moderation, this step blocks malformed or unusual prompts before they reach the model.

Third-Party Models and Supply Chain

LLMs often rely on open-source or proprietary models, as well as vector libraries or external indexing services. Each external component can hide vulnerabilities or malicious code. It is crucial to verify cryptographic integrity of artifacts through signatures and checksums.

An unvalidated update can introduce unexpected behavior or a backdoor. A model and container validation process—similar to a continuous integration/continuous deployment (CI/CD) pipeline—enables automatic security and compliance testing before deployment.

Establishing an internal registry of approved models prevents the use of unverified versions. A private repository, coupled with controlled deployment policies, ensures that only validated artifacts reach production.

Classic Attacks: Prompt Injection and Data Leakage

Prompt injection allows an attacker to alter the model’s behavior to execute commands or exfiltrate data. Data leaks occur when the LLM reproduces or correlates unfiltered sensitive information.

Example: An industrial manufacturer had indexed all of its client contracts without verification for an internal assistant. A simple prompt injection enabled extraction of confidential clauses, which were then displayed in plaintext in the logs, demonstrating that a lack of granular RAG data control leads to severe leaks.

Prompt Injection: Mechanisms and Consequences

Prompt injection happens when a malicious user inserts a hidden instruction into the prompt to hijack the LLM’s behavior. Such an attack can force the model to reveal its internal context or perform unintended actions. Attacks can be subtle and difficult to detect if contextual validation is insufficient.

Consequences range from leaking confidential recommendations to corrupting entire workflows. For example, an LLM driving a report-generation pipeline might inject biased calculations or links to unvalidated scripts, compromising the integrity of enterprise data.

Traditional keyword-based filters are not enough. Paraphrasing techniques or prompt polymorphism easily bypass these defenses. Contextual validation combined with linguistic sandboxing offers a more robust approach.

Sensitive Data Leakage

When the model has broad access to internal documents, it may return critical excerpts without understanding the impact. A simple prompt asking “summarize the key points” can expose segments protected by trade secrets or reveal personal data subject to regulation.

An output-filtering mechanism should be implemented alongside preliminary moderation. It compares generated content against corporate classification rules, automatically blocking or anonymizing sensitive fragments.

Segmentation of RAG indexes is also recommended: separating high-risk data (patents, contracts, medical records) from low-criticality information (public technical documentation) limits the impact of potential leaks and simplifies monitoring.

RAG Knowledge-Base Poisoning

Knowledge-base poisoning involves injecting malicious or erroneous information into the repository. When the LLM uses this data to respond, answers become corrupted, degrading service trust, quality, and security.

Provenance tracking must be implemented for every vector or indexed document. A hash, creation date, and source identifier allow rejecting any element that does not meet governance criteria.

Regular manual reviews of new ingested documents, combined with random sampling and linguistic consistency metrics, quickly detect anomalies and prevent the LLM agent from relying on corrupted data.

Edana: strategic digital partner in Switzerland

We support companies and organizations in their digital transformation

Emerging Risks: Autonomous Agents and Unbounded Resource Usage

AI agents can take uncontrolled initiatives and modify the information system without validation. Excessive resource consumption can incur unexpected costs and service disruptions.

Excessive Agent Autonomy

Certain scenarios pair an LLM with agents capable of executing commands in the information system, such as sending emails, managing tickets, or updating data. Without constraints, these agents may operate outside intended boundaries, generating erroneous or malicious actions.

Permissions granted to each agent must be strictly limited. An agent tasked with synthesizing reports should not trigger production workflows or alter user permissions. This separation of duties prevents escalation of impact in case of compromise.

A human-in-the-loop validation layer must be introduced for any sensitive action. Critical workflows—such as executing updates or publishing external content—require explicit approval before execution.

Resource Overconsumption and Internal Denial of Service

Unrestricted use of an LLM can lead to excessive CPU/GPU consumption, impacting other services and degrading overall performance. Poorly calibrated automatic query loops are especially dangerous.

Implementing query quotas and resource thresholds at the API and infrastructure levels allows automatic blocking of abnormal usage. Dynamic rules adjust these limits based on business priority levels.

Proactive alerts based on observability data (metrics, traces, logs) inform IT teams as soon as a session exceeds a critical threshold. Coupled with rapid response playbooks, they ensure effective remediation.

Supply Chain Weaknesses

End-to-end dependencies (tokenization libraries, streaming clients, container orchestrators) form a software supply chain. A vulnerability in an open source library can propagate risk to the core of the LLM system.

Supply chain analysis using Software Composition Analysis (SCA) tools automatically identifies vulnerable or outdated components. Integrated into the CI/CD pipeline, this step prevents introducing flaws that conventional tests might miss.

In addition, regular license reviews and update policies minimize the risk of abandoned dependencies. Teams must ensure that third-party vendors remain active and that security patches are delivered in a timely manner.

Safeguards and Good Governance: Building a Reliable Posture

An LLM security strategy relies on rigorous technical controls and dedicated governance. Regular reviews, component isolation, and human validation ensure a controlled deployment.

Example: A Swiss public-sector organization conducted red teaming exercises on an internal AI assistant and isolated its vector index within a private network. This initiative uncovered multiple prompt injection vectors and demonstrated the value of strict flow separation in dramatically reducing the attack surface.

Strict Separation of Instructions and Data

Separating prompt code (instructions) from business data (corpora, vectors) prevents cross-contamination. Processing pipelines must isolate these two domains and allow only an encrypted, validated channel for prompt transmission.

A two-phase approach—preprocessing prompts in a demilitarized environment, then executing in a secure sandbox—limits injection risks and ensures no external active instruction directly contacts the model.

This separation also facilitates security audits. Experts can independently review instructions and data to validate compliance without interfering with business logic.

Permission Limitation and Observability

Applying least privilege to every component—models, agents, connectors—prevents the AI from exceeding its prerogatives. Service accounts for LLMs should be restricted to the bare minimum access needed to perform their tasks.

A centralized observability infrastructure continuously collects performance, usage, and security metrics. Dedicated dashboards for LLMs enable visualization of query patterns, data volumes processed, and intrusion attempts.

Correlating application and infrastructure logs facilitates real-time attack detection. An alerting engine configured on these events triggers automatic or semi-automatic remediation procedures.

Red Teaming and AI Governance

Red teaming exercises simulate attacks to evaluate the effectiveness of safeguards. They target processes, pipelines, and user interfaces to uncover operational or organizational weaknesses.

Formal AI governance defines roles and responsibilities: steering committee, security officers, data stewards, and business liaisons. Each new LLM use case undergoes a joint review by these stakeholders.

Security performance indicators (KPIs)—number of incidents detected, mean response time, percentage of blocked queries—measure the maturity of the AI posture and guide action plans.

From Risky LLM Use to Secure Advantage

LLM security should be viewed as a cross-functional project involving architecture, data, development, and governance. Identifying risks—prompt injection, data leakage, autonomous agents, resource overconsumption, supply chain—constitutes the first step toward a controlled implementation.

By applying best practices in data and instruction separation, minimal permissions, advanced observability, red teaming, and formal governance, organizations can fully leverage LLMs while minimizing the attack surface. This technical and organizational foundation ensures an evolving, secure deployment aligned with business objectives.

Our Edana experts are at your disposal to co-develop an LLM security strategy tailored to your context and goals. Together, we will establish the technical safeguards and governance processes needed to turn these risks into a true lever for performance and innovation.

Views: 117

Views: 117