Summary – Under pressure to reduce costs and risks, accelerate workflows and secure AI, businesses must move beyond the testing phase and target tangible ROI. AI agents orchestrating workflows, unified multimodal models, edge AI for latency and privacy, and strengthened governance are the key trends that set operational deployments apart. Solution: build a modular open-source platform, integrate cloud/edge via MLOps, structure projects through multidisciplinary committees and optimize energy efficiency in compliance with the AI Act and ISO 42001.

By 2026, artificial intelligence is no longer a mere showcase: it is embedded in business processes to deliver measurable gains. Decision-makers prioritize solutions that reduce costs, speed up workflows, mitigate risks, or generate tangible revenue.

This reality is confirmed by the Stanford AI Index 2025, which highlights the growing industrialization of AI in enterprises. Four trends now separate decorative prototypes from operational solutions: AI agents, multimodal models, the resurgence of edge AI, and the governance and energy-efficiency requirements that underpin all three.

AI Agents for Automated Workflows

AI agents automate sequences of actions within a controlled framework. They’ve moved from demo to efficient business execution.

These systems provide granular workflow control while remaining under human supervision.

Ability to Automate Complex Tasks

AI agents stand out for orchestrating multiple successive operations without manual intervention. By combining document recognition, API calls, and database updates, they’re now pivotal in critical processes like invoice management or incident tracking.

Designed to operate within precise time windows and under business rules, these agents can, for example, analyze a client report, create a ticket, notify a manager, and trigger approval workflows.
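The sequence of report analysis, ticket creation, notification, and approval request can be sketched as a small Python pipeline. This is an illustrative sketch only: `run_report_workflow`, `WorkflowResult`, the keyword check, and the ticket ID scheme are hypothetical stand-ins, not the API of any particular agent framework.

```python
import zlib
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class WorkflowResult:
    """Records what the agent did, in order, so a human can review it."""
    steps: List[str] = field(default_factory=list)
    ticket_id: Optional[str] = None
    approval_requested: bool = False


def run_report_workflow(report_text: str, manager: str) -> WorkflowResult:
    result = WorkflowResult()

    # Step 1: analyze the report. A keyword check stands in for a model call.
    result.steps.append("analyze_report")
    if "incident" not in report_text.lower():
        return result  # nothing to escalate

    # Step 2: create a ticket (hypothetical ID derived from the report text).
    checksum = zlib.crc32(report_text.encode("utf-8")) % 10000
    result.ticket_id = f"TICKET-{checksum:04d}"
    result.steps.append("create_ticket")

    # Step 3: notify the manager (recorded here instead of sending a message).
    result.steps.append(f"notify:{manager}")

    # Step 4: request approval; the agent stops here and waits for a human.
    result.approval_requested = True
    result.steps.append("request_approval")
    return result
```

In this sketch the agent never approves anything itself: the last step only flags that a human decision is pending.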

Using open-source, modular frameworks enables rapid integration into a unified architecture without vendor lock-in, a key Edana principle for maintaining scalability and independence. Developers can then build agents that improve as validated actions are fed back into them.

Human Supervision and Safeguards

To ensure compliance and security, each AI agent must operate within a limited and documented scope of actions. Access rights are calibrated so that no critical operation can occur without prior approval.

Execution logs and real-time alerts provide full traceability. In case of an incident, an administrator can pause the workflow, analyze the context, then restart or correct the agent.
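A minimal sketch of these safeguards, assuming a hypothetical `AgentGuard` class: the agent has a documented allowlist of actions, critical actions need a named approver, every decision is logged, and an administrator can pause the agent. The class, field names, and action names are illustrative assumptions.

```python
import logging
from dataclasses import dataclass, field
from typing import List, Set, Tuple

log = logging.getLogger("agent_guard")


@dataclass
class AgentGuard:
    allowed_actions: Set[str]    # the documented scope of the agent
    critical_actions: Set[str]   # subset that requires prior approval
    paused: bool = False         # set to True by an administrator
    audit_trail: List[Tuple[str, str, str]] = field(default_factory=list)

    def authorize(self, action: str, approved_by: str = "") -> bool:
        """Decide whether an action may run and record the decision."""
        if self.paused:
            decision = "rejected: agent paused"
        elif action not in self.allowed_actions:
            decision = "rejected: out of scope"
        elif action in self.critical_actions and not approved_by:
            decision = "rejected: approval required"
        else:
            decision = "allowed"
        # Every decision is kept, including rejections, for traceability.
        self.audit_trail.append((action, approved_by, decision))
        log.info("action=%s approved_by=%s decision=%s", action, approved_by, decision)
        return decision == "allowed"
```

For instance, a guard created with `AgentGuard({"read_invoice", "pay_invoice"}, {"pay_invoice"})` lets `read_invoice` through, but rejects `pay_invoice` until an approver is named.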

This approach is supported by strict internal governance: usage policies, review committees, and regular audits govern the agents’ lifecycle. It’s a sine qua non for defending these initiatives before legal and security departments.

Concrete Example

A Swiss logistics company deployed an AI agent to process supplier deliveries. The agent automatically extracts delivery notes, verifies that delivered quantities match the order, then alerts quality teams about discrepancies. The result: processing time dropped from 48 hours to 4 hours, and error rates fell by 75%, demonstrating the concrete potential of well-governed, agent-driven orchestration.
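The quantity check in this example could look like the sketch below. It is a hypothetical illustration, not the company's implementation: `find_discrepancies` and the item names are assumptions, and the extraction of quantities from the delivery note is taken as already done.

```python
from typing import Dict


def find_discrepancies(ordered: Dict[str, int], delivered: Dict[str, int]) -> Dict[str, int]:
    """Return delivered-minus-ordered for every item whose quantities differ."""
    discrepancies = {}
    # Look at items from both documents so that missing and
    # unexpected items are reported as well.
    for item in sorted(set(ordered) | set(delivered)):
        delta = delivered.get(item, 0) - ordered.get(item, 0)
        if delta != 0:
            discrepancies[item] = delta
    return discrepancies
```

A negative value means a shortfall, a positive value means surplus or unordered goods. An empty result means the delivery matches the order and no alert is needed.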

Widespread Adoption of Multimodal Models

Multimodal models unify text, image, audio, and video processing on a single AI foundation. They pave the way for cross-functional applications.

This convergence cuts maintenance costs and makes it easier to add new capabilities without deploying multiple separate pipelines.

A Single Foundation for Text and Media

The rise of multimodal architectures now allows a single model to analyze a PDF document, extract figures, and generate an oral summary. This uniformity simplifies integration into reporting or customer-service workflows.

By sharing resources, businesses limit external API calls and reduce their AI ecosystem’s complexity. Developers create a single entry point for various data types, accelerating time-to-market.

The open-source, modular approach permits reusing specialized modules (OCR, object recognition, speech synthesis) while retaining full control over model updates and hosting.
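The idea of a single entry point with reusable specialized modules can be sketched as a small dispatcher. The handlers below are placeholders that only measure their input; in a real system they would call a multimodal model or a module such as OCR. All names (`handles`, `process`, `HANDLERS`) are hypothetical.

```python
from typing import Callable, Dict

# Registry mapping a media type to the module that handles it.
HANDLERS: Dict[str, Callable[[bytes], str]] = {}


def handles(media_type: str):
    """Decorator that registers a handler for one media type."""
    def register(fn: Callable[[bytes], str]) -> Callable[[bytes], str]:
        HANDLERS[media_type] = fn
        return fn
    return register


@handles("text")
def handle_text(payload: bytes) -> str:
    words = payload.decode("utf-8").split()
    return f"text:{len(words)} words"


@handles("image")
def handle_image(payload: bytes) -> str:
    return f"image:{len(payload)} bytes"


def process(media_type: str, payload: bytes) -> str:
    """Single entry point: route the payload to the registered module."""
    try:
        handler = HANDLERS[media_type]
    except KeyError:
        raise ValueError(f"unsupported media type: {media_type}") from None
    return handler(payload)
```

Adding a capability then means registering one more handler rather than deploying a separate pipeline.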

Personalized Interactions

Thanks to multimodal flexibility, support systems now combine image recognition (e.g., a damaged product photo) with text or voice response generation. This personalization boosts satisfaction while maintaining centralized interaction tracking.

Companies fine-tune models contextually to enrich knowledge bases tailored to their industries. These adaptations are increasingly automated within CI/CD pipelines to ensure consistency and quality.

This integration relies on containerized microservices, promoting scalability and traceability.

Edana: strategic digital partner in Switzerland

We support companies and organizations in their digital transformation

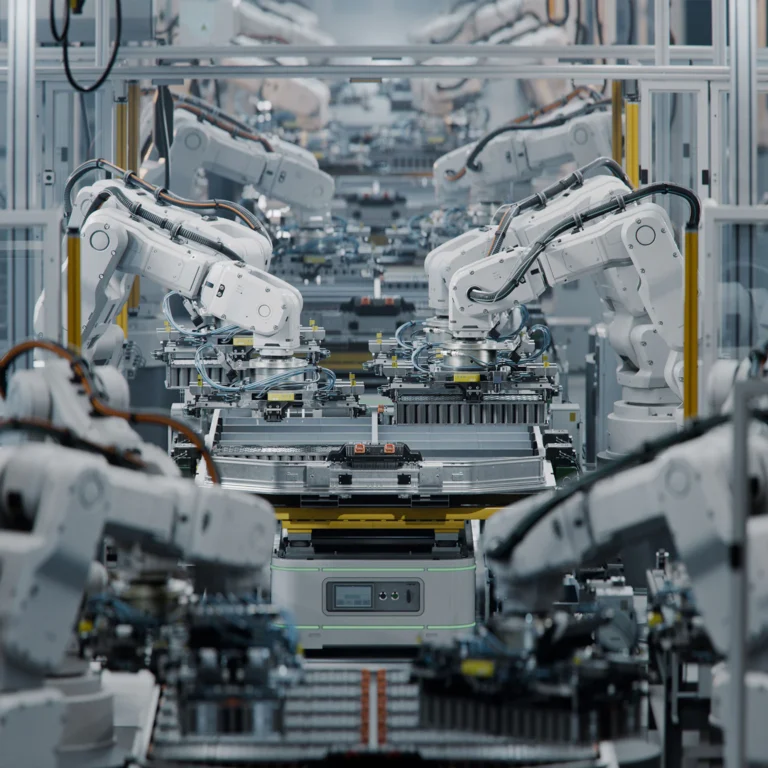

Local Inference with Edge AI

Local inference reduces latency and cuts data transfers. Edge AI is essential for real-time or sensitive use cases.

This hybrid cloud/edge approach optimizes costs and enhances data privacy by limiting cloud exchanges.

Latency Reduction

Running inferences directly on embedded devices or edge servers brings response times down to milliseconds—crucial for predictive maintenance, industrial vision, or point-of-sale terminals.

Edge-friendly MLOps pipelines make it easier to deploy quantized or pruned models by compressing and securing artifacts before transfer.
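To make quantization concrete, here is a minimal sketch of symmetric 8-bit quantization on a plain list of weights. It assumes one scale factor for the whole list and uses only the standard library. Production toolchains work per tensor or per channel and handle calibration, which this sketch omits.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer values."""
    return [q * scale for q in quantized]
```

Each weight is stored in 8 bits instead of 32, at the price of a rounding error of at most half the scale per weight.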

This proximity boosts performance and ensures a consistent user experience, regardless of network conditions.

Data Optimization and Privacy Protection

By minimizing cloud traffic, edge AI reduces exposure of sensitive data. Critical processing stays on-site, and only aggregated or anonymized results leave the local environment.
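A sketch of what "only aggregated results leave" can mean in practice: raw readings stay on-site and the function below returns only counts and summary statistics. The field names, the `summarize_for_cloud` function, and the minimum group size are illustrative assumptions.

```python
from statistics import mean
from typing import Dict, List


def summarize_for_cloud(readings: List[Dict], min_group_size: int = 3) -> Dict:
    """Reduce raw on-site readings to aggregate values before upload."""
    count = len(readings)
    if count < min_group_size:
        # Too few records: an average could reveal an individual reading.
        return {"count": count, "suppressed": True}
    temperatures = [r["temperature_c"] for r in readings]
    # Identifying fields such as operator names are never copied over.
    return {
        "count": count,
        "suppressed": False,
        "mean_temperature_c": round(mean(temperatures), 2),
        "max_temperature_c": max(temperatures),
    }
```

Aggregation of this kind limits exposure, but it is not a formal anonymization guarantee on its own.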

This architecture supports compliance with the GDPR’s data-minimization principle and with the AI Act. Models remain under company control within its infrastructure, safeguarding privacy.

Combined with model and data-encryption policies, it enhances resilience against interception or data leaks.

Hybrid Cloud/Edge Architecture

Critical applications rely on a central orchestrator that dynamically distributes workloads between cloud and edge based on compute needs and network quality.
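The placement decision made by such an orchestrator can be sketched as a simple rule. The policy below is an assumption chosen for illustration (edge first when the task fits, cloud when the network allows it, otherwise defer). `Task`, `choose_target`, and the units are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    compute_gflops: float   # estimated compute needed by the task
    max_latency_ms: float   # latency budget the result must meet


def choose_target(task: Task, edge_capacity_gflops: float,
                  network_rtt_ms: float, network_up: bool = True) -> str:
    """Return 'edge', 'cloud', or 'deferred' for one task."""
    # Tasks that fit locally stay on the edge: no network dependency.
    if task.compute_gflops <= edge_capacity_gflops:
        return "edge"
    # Heavy tasks go to the cloud only if the link is usable
    # and the round trip fits inside the latency budget.
    if network_up and network_rtt_ms <= task.max_latency_ms:
        return "cloud"
    # Otherwise hold the task until conditions improve.
    return "deferred"
```

A real orchestrator would also weigh cost, queue length, and energy, but the same inputs (compute needs and network quality) drive the decision.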

Edge microservices are managed via Kubernetes or K3s, a lightweight Kubernetes distribution suited to edge hardware, ensuring portability and scalability across varying volumes and use cases.

This flexibility allows for progressive scaling while minimizing overall energy footprint, in line with Edana’s eco-design strategy.

Concrete Example

An industrial production site in Switzerland deployed smart cameras with edge AI for real-time defect detection on the line. Analyses run locally, triggering immediate corrective actions without waiting for cloud validation. Defect rates dropped by 30% and machine downtime by 20%, illustrating the tangible benefits of local inference.

AI Governance and Energy Efficiency

Compliance with the AI Act, NIST AI RMF, and ISO 42001 has become indispensable for defending AI projects legally and during audits.

At the same time, managing data-center energy costs demands strict trade-offs on model size and infrastructure.

AI Act Compliance and Standard Frameworks

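Since February 2025, the AI Act’s first obligations have applied in the EU: the ban on prohibited practices and AI literacy requirements. Obligations for general-purpose AI models, including transparency and documentation duties, followed in August 2025. From August 2026, most of the remaining provisions apply, with requirements on risk management and impact assessment.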

The NIST AI RMF is accompanied by a Generative AI Profile (NIST AI 600-1) detailing controls for monitoring reliability, bias, and security. ISO/IEC 42001 complements this by specifying requirements for an AI management system.

Adopting these governance frameworks secures audits and demonstrates rigorous oversight to legal and financial stakeholders.

Risk Management and Oversight

AI governance relies on multidisciplinary committees—including IT, business units, compliance, and cybersecurity—to define criticality levels and approve mitigation plans for each use case.
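A committee needs a shared scale to assign criticality levels. One common approach, sketched here under assumed thresholds, is an impact-times-likelihood score on a 1 to 5 scale. The function name and the cut-off values are hypothetical and would be set by each organization.

```python
def criticality(impact: int, likelihood: int) -> str:
    """Classify a use case from two ratings, each between 1 and 5."""
    if not (1 <= impact <= 5 and 1 <= likelihood <= 5):
        raise ValueError("impact and likelihood must be between 1 and 5")
    score = impact * likelihood
    # Thresholds are illustrative assumptions, not taken from a standard.
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"
```

A use case rated impact 5 and likelihood 3 scores 15 and lands in "high", which in this sketch would require an approved mitigation plan before deployment.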

Processes include upfront training-data assessments, robustness testing, and periodic production-performance reviews.
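A periodic production-performance review can start from a simple check: compare recent accuracy with the accuracy measured at validation time and escalate when the drop exceeds a tolerance. The function name and the 5-point tolerance are assumptions for illustration.

```python
from typing import List


def needs_review(baseline_accuracy: float, recent_outcomes: List[bool],
                 tolerance: float = 0.05) -> bool:
    """Flag a model whose recent accuracy fell too far below its baseline."""
    if not recent_outcomes:
        return True  # no evidence at all is itself a reason to review
    recent_accuracy = sum(recent_outcomes) / len(recent_outcomes)
    return (baseline_accuracy - recent_accuracy) > tolerance
```

Each entry in `recent_outcomes` is `True` when a production prediction was later confirmed as correct.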

Automated reporting feeds risk dashboards, facilitating decision-making and regulatory compliance.

Energy Optimization and Infrastructure

The International Energy Agency projects a structural rise in AI-related data-center electricity consumption by 2030. The response involves selecting more compact models and optimizing inference workloads.

Hybrid cloud/edge architectures shift heavy processing to low-carbon energy sites while leveraging local servers for peak compute demands.

Adopting specialized compute units (TPUs, low-power GPUs) and energy-monitoring solutions is a lever to reduce carbon footprint without sacrificing performance.
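Energy monitoring comes down to simple arithmetic: energy in watt-hours is average power in watts multiplied by duration in hours. The sketch below compares two configurations. The function names are hypothetical, and the power figures in the usage note are made-up inputs, not measurements.

```python
def energy_wh(avg_power_watts: float, seconds: float) -> float:
    """Energy in watt-hours for a load running at a given average power."""
    return avg_power_watts * seconds / 3600


def relative_saving(baseline_wh: float, optimized_wh: float) -> float:
    """Fraction of energy saved compared with the baseline."""
    if baseline_wh <= 0:
        raise ValueError("baseline energy must be positive")
    return (baseline_wh - optimized_wh) / baseline_wh
```

With assumed inputs of 400 W for a baseline model and 300 W for a quantized one over the same hour, `relative_saving(energy_wh(400, 3600), energy_wh(300, 3600))` gives a saving of one quarter.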

Concrete Example

A Swiss healthcare facility established an internal framework aligned with the AI Act and ISO 42001 for its medical AI projects. Semi-annual audits confirmed compliance and revealed a 25% reduction in model energy consumption through quantization and cloud/edge orchestration. This initiative strengthened stakeholder trust and controlled energy costs.

AI as a Sustainable Operational Advantage

AI agents, multimodal models, and edge AI deliver gains in cost, speed, and risk reduction, provided they’re underpinned by robust governance and efficient infrastructure. In 2026, AI is judged not by demos but by measurable ROI.

Every project must build on modular, open-source architectures, ensure data quality upfront, and comply with regulatory frameworks and energy goals.

Our experts are ready to help you define a contextualized, secure AI strategy aligned with your business challenges—from design to industrialization.
